
What Is a Robots.txt File?

If you have a WordPress website, you may have seen a robots.txt file. Did you wonder what it is? It’s a file that assists with limiting and controlling website access.

The better you understand the robots.txt file, the clearer its usefulness becomes. Moreover, you’ll discover that you can add robots.txt to any WordPress website, allowing you to impose your own rules.

Ready to be the boss? Let’s get to it.

What Is Robots.txt in WordPress?

The “robots” in the title refers to the “bots” that crawl the web. The most familiar of these are those that search engines use to rank and index websites.

These bots are how your website gets indexed and appears in search engine results pages (SERPs). Nonetheless, this doesn’t mean that they should have free access to your website. Developers recognized this problem in the mid-1990s and created the Robots Exclusion Standard.

Robots.txt is the embodiment of that standard: it makes it possible for webmasters to define how participating bots interact with their website.

Thanks to robots.txt, webmasters can prevent bots from interacting with their websites. Alternatively, they can restrict access to only certain parts of the website.

It’s critical to note that robots.txt is only effective on “participating” robots. This means that it cannot compel bots to comply with it. If a malicious bot arrives, it will ignore the robots.txt file and its rules.

Even seemingly benign bots may ignore some robots.txt rules. For example, Googlebot ignores the Crawl-delay rule, which asks a bot to limit how often it visits a website.
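To make the “participating” idea concrete, here is a minimal Python sketch of what a well-behaved bot does before requesting a page. It uses the standard library’s `urllib.robotparser`; the bot name `ExampleBot`, the `example.com` URLs, and the rules themselves are made up for illustration. Nothing forces a bot to run a check like this, which is why malicious bots can simply skip it.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules a site might publish; not taken from any real website.
ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 10
Disallow: /private/
"""

def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that the bot has already downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

rules = load_rules(ROBOTS_TXT)

# A participating bot asks before it fetches. A non-participating bot never does.
may_fetch_blog = rules.can_fetch("ExampleBot", "https://example.com/blog/")
may_fetch_private = rules.can_fetch("ExampleBot", "https://example.com/private/notes.html")

# Crawl-delay is only a request for a pause between visits;
# each bot decides for itself whether to honor it.
requested_delay = rules.crawl_delay("ExampleBot")

print(may_fetch_blog, may_fetch_private, requested_delay)  # True False 10
```

The important point is where the check lives: in the bot’s own code, not on your server. The file states your wishes, and the bot chooses whether to respect them.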

Why Is the Robots.txt File Important?

Webmasters benefit from a robots.txt file because it tells search engine crawlers which pages on the website to focus on for indexing. This gets the most important pages noticed while less vital pages are ignored. The right rules may also prevent bots from wasting your website’s server resources.

Creating and Editing a Robots.txt File in WordPress

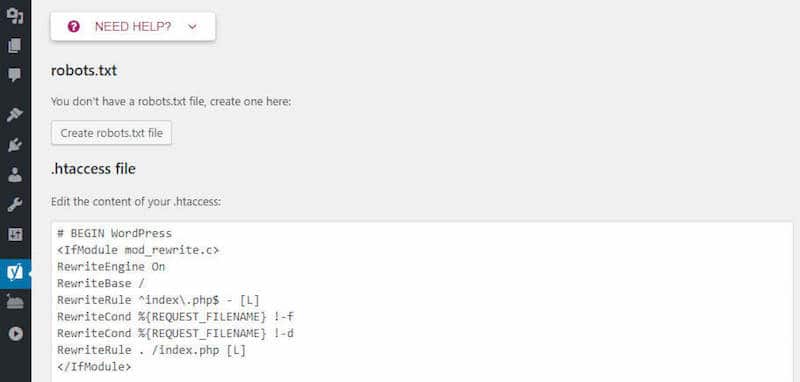

When you create a WordPress site, a virtual robots.txt file is included. Because it’s virtual, there is no file on the server for you to open and edit. If you want to edit it, you’ll need to create a physical file.

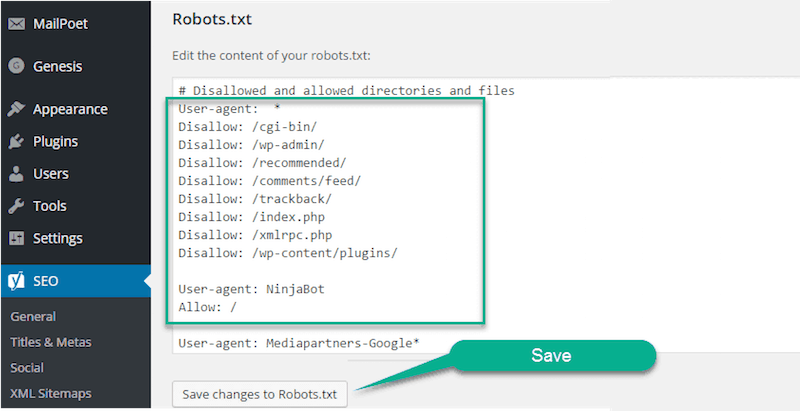

Using the Yoast SEO plugin is one way to accomplish this. In your WordPress dashboard, activate Yoast’s advanced features by navigating to SEO, then Dashboard, then Features. Then, switch on the Advanced Settings pages toggle.

Go back to SEO, choose Tools and then File Editor. One of the options under this selection is Create Robots.txt file.

Those favoring the All In One SEO Pack plugin will simply navigate to Feature Manager and then Activate the Robots.txt file.

Even those who aren’t using an SEO plugin can use SFTP to create a physical robots.txt file. Just create a file named robots.txt in a text editor, and then connect to your website using SFTP and upload the file to your website’s root folder.
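If you would rather script the first half of that step, the following Python sketch writes a robots.txt file to a local folder, ready to upload over SFTP. The helper name `write_robots_txt`, the `upload` folder, and the rules are all placeholders chosen for this example; the upload itself is left to whatever SFTP client you already use.

```python
from pathlib import Path

def write_robots_txt(folder: Path, lines: list[str]) -> Path:
    """Write the given rules to folder/robots.txt, one rule per line."""
    folder.mkdir(parents=True, exist_ok=True)
    target = folder / "robots.txt"  # bots look for exactly this file name
    target.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return target

# Example rules only; replace them with the rules you actually want.
path = write_robots_txt(
    Path("upload"),
    ["User-agent: *", "Disallow: /wp-admin/"],
)
print(path.read_text(encoding="utf-8"))
```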

How to Modify Your Robots.txt File

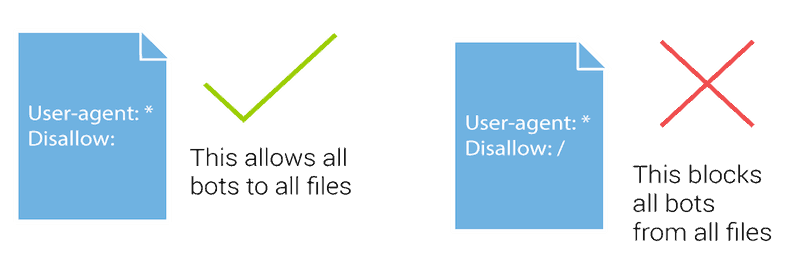

Two commands are at the center of the robots.txt file. The first is “user-agent,” which is the command that enables you to target certain bots. The second is “disallow,” which informs visiting bots that they shouldn’t access certain website areas.
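To show how those two commands fit together, here is a rough Python sketch that reads robots.txt text and lists each “disallow” path under the “user-agent” it belongs to. The function name `group_rules` and the sample rules are invented for this example, and the parser is deliberately simplified: it reads only these two commands and treats every user-agent line as the start of a new group.

```python
def group_rules(robots_txt: str) -> dict[str, list[str]]:
    """Map each user-agent name to the paths it is told not to visit.

    Simplified on purpose: only User-agent and Disallow lines are read,
    and every User-agent line starts a new group.
    """
    groups: dict[str, list[str]] = {}
    current_agent = None
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments and whitespace
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        if field.lower() == "user-agent":
            current_agent = value
            groups.setdefault(current_agent, [])
        elif field.lower() == "disallow" and current_agent is not None:
            groups[current_agent].append(value)
    return groups

sample = """\
User-agent: ExampleBot   # a made-up bot name
Disallow: /drafts/
Disallow: /tmp/
"""
print(group_rules(sample))  # {'ExampleBot': ['/drafts/', '/tmp/']}
```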

Suppose that you wanted to block a particular bot from accessing your website. For simplicity’s sake, let’s say that you want to block Google’s bots. Here’s what the code would look like:

    User-agent: Googlebot
    Disallow: /

Alternatively, suppose you didn’t want bots to gain access to a certain file or folder on your website. In this example, you don’t want bots to get into the wp-admin folder or the wp-login.php. These are the commands you would use:

    User-agent: *
    Disallow: /wp-admin/
    Disallow: /wp-login.php

By using an “*” in the user-agent command, you apply this rule to all bots.

In another scenario, assume that you want bots to be able to access a certain file in a folder that is otherwise disallowed. You would enter these commands:

    User-agent: *
    Disallow: /wp-admin/
    Allow: /wp-admin/admin-ajax.php

This code means that bots can’t access wp-admin, with the exception of the admin-ajax.php file.
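Why does the Allow line win even though the Disallow line comes first? Major crawlers such as Googlebot apply the most specific matching rule, meaning the one with the longest path, rather than the first one listed. The Python sketch below imitates that behavior for plain path prefixes. The function name `is_allowed` is made up, wildcards are not handled, and not every bot is guaranteed to resolve conflicts this way.

```python
# Rules for one user-agent group, as (command, path) pairs.
RULES = [
    ("disallow", "/wp-admin/"),
    ("allow", "/wp-admin/admin-ajax.php"),
]

def is_allowed(path: str, rules: list[tuple[str, str]]) -> bool:
    """Apply the longest matching rule; with no match, the path is allowed."""
    best_length = -1
    allowed = True
    for command, rule_path in rules:
        if not rule_path or not path.startswith(rule_path):
            continue  # empty or non-matching rules do not apply
        longer = len(rule_path) > best_length
        tie_prefers_allow = len(rule_path) == best_length and command == "allow"
        if longer or tie_prefers_allow:
            best_length = len(rule_path)
            allowed = command == "allow"
    return allowed

print(is_allowed("/wp-admin/admin-ajax.php", RULES))  # True
print(is_allowed("/wp-admin/options.php", RULES))     # False
print(is_allowed("/blog/", RULES))                    # True
```

Some simpler parsers, including Python’s own `urllib.robotparser`, stop at the first rule that matches instead, so listing the Allow line before the Disallow line is a harmless way to keep such tools in agreement.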

Suppose that you wanted to apply certain rules to some bots but not to others. In that case, you’d need two groups of rules. The first applies to all bots, while the second applies only to Googlebot.

    User-agent: *
    Disallow: /wp-admin/

    User-agent: Googlebot
    Disallow: /
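A quick way to check how these two groups interact is Python’s built-in `urllib.robotparser`. In the sketch below, `Bingbot` stands in for “any other bot” and `example.com` is a placeholder domain. Note that a bot with its own group follows only that group, so Googlebot is blocked from the whole site here, not just from wp-admin.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/

User-agent: Googlebot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def verdicts(agent: str) -> dict[str, bool]:
    """Report whether the agent may fetch a public page and an admin page."""
    base = "https://example.com"  # placeholder domain
    return {
        "/blog/": parser.can_fetch(agent, base + "/blog/"),
        "/wp-admin/": parser.can_fetch(agent, base + "/wp-admin/"),
    }

print(verdicts("Googlebot"))  # {'/blog/': False, '/wp-admin/': False}
print(verdicts("Bingbot"))    # {'/blog/': True, '/wp-admin/': False}
```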

Do You Need to Edit Robots.txt?

Casual users of WordPress won’t have much need to modify robots.txt. However, that may change if a certain bot is proving troublesome, or if you need to alter the way that search engines engage with a particular WordPress theme or plugin. Your web host’s setup may also make changes necessary.